AI-generated video looks like magic—type a prompt, and a cinematic clip appears. But behind that simplicity lies one of the most expensive processes in modern AI. Compared to text or image generation, video creation demands significantly more computing power, suffers from higher failure rates, and still struggles to scale for real-world applications. While short-form content like TikTok videos can tolerate imperfections, high-quality, production-level AI video remains costly and inefficient. This article breaks down exactly why AI video generation is so expensive—and why that may not change as quickly as many expect.

1. The Massive Jump from Text and Images to Video

1.1 Text Generation: The Cheapest AI Modality

Text generation is relatively lightweight. A language model predicts one token at a time, building sentences sequentially. Even large models can generate paragraphs in milliseconds with relatively low compute cost per request.

- Low data complexity (1D sequence)

- Minimal spatial reasoning

- No rendering required

This makes text the most scalable and cost-efficient form of generative AI.

1.2 Image Generation: A Step Up in Complexity

Image generation introduces spatial structure. Models must generate millions of pixels simultaneously while maintaining coherence in lighting, texture, and composition.

- 2D data (height × width)

- Diffusion steps required

- Moderate GPU usage

Although more expensive than text, image generation has become relatively efficient due to optimization and hardware acceleration.

1.3 Video Generation: The Cost Multiplier

Video adds the dimension of time, turning the problem into 3D data (height × width × time). Instead of generating one image, the model must generate dozens or hundreds of frames—each consistent with the last.

- Temporal consistency required

- Motion prediction across frames

- Exponential increase in compute

A 5-second video at 24 frames per second requires 120 images—each one generated and aligned. This alone multiplies cost dramatically.

2. Computing Power: Why Video Is So Expensive

2.1 Diffusion Over Time

Most video models rely on diffusion processes. For each frame:

- Noise is gradually refined into an image

- Multiple denoising steps are required

- This process repeats across all frames

Even with optimizations, generating a short clip may require thousands of GPU operations.

2.2 Memory and GPU Constraints

Video models must hold multiple frames in memory simultaneously to maintain temporal consistency. This creates significant GPU pressure:

- High VRAM requirements

- Limited batch processing

- Slower inference speed

Compared to text or images, video models often require high-end GPUs or clusters, making them expensive to run at scale.

2.3 Training Cost Explosion

Training video models is even more expensive than inference:

- Massive video datasets required

- Longer training times

- Higher energy consumption

Unlike text (which is abundant and structured), high-quality video data is harder to collect, clean, and label.

3. The Hidden Cost: High Failure Rates

3.1 One Prompt, Many Attempts

Unlike text generation, AI video often requires multiple attempts to get a usable result. Creators typically:

- Run the same prompt multiple times

- Adjust parameters and regenerate

- Discard unusable outputs

Each failed attempt still consumes full compute resources.

3.2 Motion Errors and Inconsistency

Common issues include:

- Objects changing shape mid-motion

- Incorrect physics (floating, sliding)

- Temporal flickering

These errors often make videos unusable for professional applications, increasing the effective cost per usable clip.

3.3 Low Success Rate for Complex Scenes

The more complex the scene, the higher the failure rate:

- Multiple characters interacting

- Fast motion sequences

- Precise object behavior

This makes high-end production extremely inefficient compared to traditional video workflows.

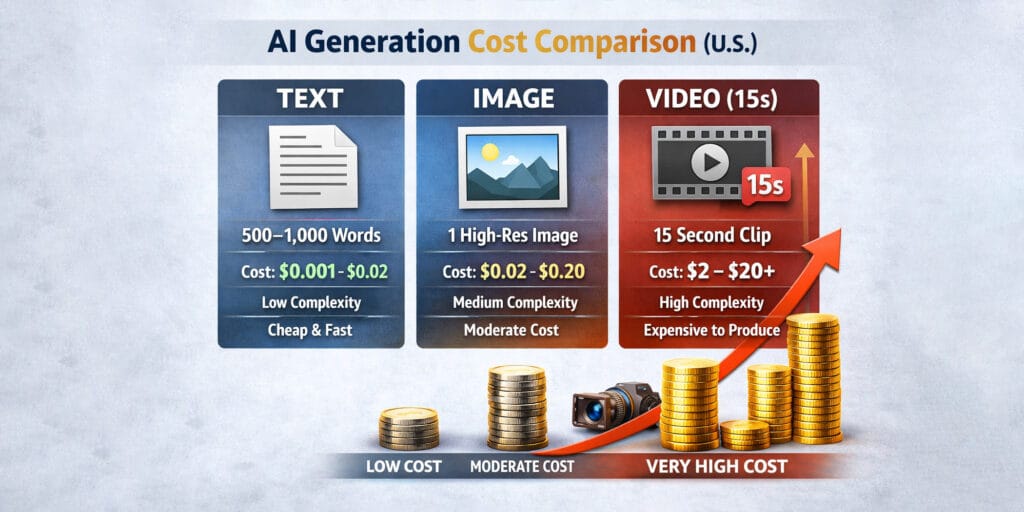

3.4 AI Generation Cost Comparison (example in the U.S.)

| Category | Typical Output | Compute Complexity | Avg Cost per Output | Notes |

|---|---|---|---|---|

| Text | 500–1,000 words | Low (1D tokens) | $0.001 – $0.02 | Extremely cheap, fast, scalable |

| Image | 1 high-res image (1024–2048px) | Medium (2D pixels + diffusion) | $0.02 – $0.20 | Stable, widely optimized |

| Video (15s) | 15 sec clip (24–30 fps) | Very High (3D: space + time) | $2 – $20+ | Huge variance, depends on retries & quality |

4. Why AI Video Is Not Yet Scalable

4.1 Cost per Output Is Still High

Even with improvements, the cost per generated video remains significantly higher than:

- Text generation

- Image generation

- Basic animation tools

This limits widespread adoption in industries where cost efficiency is critical.

4.2 Latency and Speed Issues

Video generation takes time. Unlike instant text responses:

- Rendering may take minutes per clip

- High-resolution output increases delay

- Real-time generation is still limited

This makes it impractical for many real-time applications.

4.3 Infrastructure Limitations

Running video models at scale requires:

- Large GPU clusters

- High bandwidth data pipelines

- Advanced scheduling systems

These infrastructure costs are passed on to users, keeping prices high.

5. Where AI Video Works Today

5.1 Short-Form Content (TikTok, Reels)

AI video is currently best suited for platforms where:

- Clips are short (5–15 seconds)

- Perfection is not required

- Visual impact matters more than accuracy

Small inconsistencies are often unnoticed or even accepted in fast-scrolling environments.

5.2 Concept and Prototype Videos

AI video is useful for:

- Storyboarding

- Concept visualization

- Marketing drafts

Here, speed and creativity matter more than precision.

5.3 Stylized or Abstract Content

When realism is not required, AI performs better:

- Animation-style videos

- Artistic visuals

- Music videos

This reduces the impact of physics errors.

6. Why Accuracy Still Matters

6.1 Professional Use Cases Require Precision

Industries like film, advertising, and education require:

- Consistent motion

- Accurate physics

- Reliable outputs

Current AI video models cannot yet meet these standards consistently.

6.2 Trust and Reliability Issues

High failure rates reduce trust:

- Unpredictable outputs

- Time wasted on retries

- Difficulty in controlling results

This limits adoption in mission-critical environments.

7. The Path Forward: Will Costs Go Down?

7.1 Model Optimization

Future improvements will focus on:

- Fewer diffusion steps

- More efficient architectures

- Better compression techniques

These can reduce compute requirements significantly.

7.2 Hardware Advancements

New GPU and AI accelerators will:

- Increase processing speed

- Lower energy cost per operation

- Enable real-time generation

7.3 Better Motion Understanding

Improving motion accuracy will:

- Reduce failure rates

- Increase usable output per generation

- Lower overall cost per successful video

7.4 Hybrid Workflows

Instead of full AI generation, future pipelines may combine:

- AI-generated keyframes

- Traditional animation tools

- Human editing

This hybrid approach balances cost and quality.

8. AI Video Generation Is Still Very Expensive

AI video generation is expensive because it sits at the intersection of massive computation, complex motion modeling, and high failure rates. Unlike text or images, video requires consistent storytelling across time, making it one of the hardest problems in AI. While current models can produce impressive short clips, they are still far from scalable for widespread, high-precision applications. For now, AI video thrives in short-form, low-stakes environments—but the future promises more efficient systems that will bring costs down and unlock its full potential.

For more information, visit Bel Oak Marketing.